This article has been published in German in the Red Stack Magazine and in English in the PTK Magazine.

The lament in the headline might be very familiar to most developers and even more painfully to quite a few users. “What happened between yesterday and today to cause my application to break? And why did it have to be reported by my user?”

After some contemplation, we remember a small change we made yesterday, shortly before leaving for home. The customer asked for a new column in the View v_starwars_ characters which we added on the fly. Nothing special, but very urgent, and it seemed so simple: just a new field with information about the movies in which the Star Wars characters appear:

| ID | NAME | EPISODES (new) |

|---|---|---|

| 1 | Darth Vader | 3,4,5,6 |

| 2 | Luke Skywalker | 4,5,6,7,8,9 |

| 3 | Rey | 7,8,9 |

This is a very simple create or replace view. Of course we tested afterwards via select if the new column shows the expected results.

But if we now try to change the name of a character, we get the following database error:

„ORA-01733: virtual column not allowed here”

Maybe you already guessed it, maybe you experienced this special behaviour of the Oracle database yourself: whenever you replace a view, the belonging instead-of trigger is lost. A small, simple change with big and annoying consequences.

How could we have prevented this error?

„Better testing“ is an obvious answer and it won’t take long until we get to checklists and standardized test protocols to be sure that every important aspect of our application is tested, after every change.

That’s the point where automated self-tests become interesting, because they are exactly that: a number of narrowly defined, standardized checks of a certain functionality – for example, whether updating a specific column of a view is possible.

We can create such a test with straight standard PL/SQL like this:

declare

l_name varchar2(2000);

begin

update v_starwars_characters set name = 'Anakin Skywalker' where id = 1;

select name into l_name

from v_starwars_characters where id = 1;

if ( l_name <> 'Anakin Skywalker') then

raise_application_error(-20000, 'Update did not work!');

end if;

end;

We could now save this block as a script and run it after every update. However, this would become pretty confusing and tedious with a growing number of tests, which is why it’s helpful to use utPLSQL. The free, actively maintained open source framework can take a lot of work out of our hands.

Installing utPLSQL

To use utPLSQL it has to be installed into the database first.

To do this you can download the latest release version from GitHub. You can find some prepared scripts in the folder „source“ to smoothly install utPLSQL:

„install_headlesss.sql“to do a default-installation into schema „ut3“ with public synonyms (run as sys-user)„install.sql“to install it into a different schema (no sys-user needed). In that case you need to grant access to the utPLSQL functions by running one of the following scripts:„create_synonyms_and_grants_for_public.sql“for all users

„create_user_grants.sql“and„create_user_synonyms.sql“for a specific user

A detailed installation guideline can be found at http://utplsql.org/utPLSQL/latest/userguide/install.html or in folder docs/userguide/install.html of the downloaded ZIP archive.

A first test with utPLSQL

While most PL/SQL developers are used to the procedural model and rely heavily on packages, utPLSQL uses the object-oriented possibilities of the Oracle database and provides a “fluent” API. It might look odd at first, but you will get used to it quickly and probably come to love it.

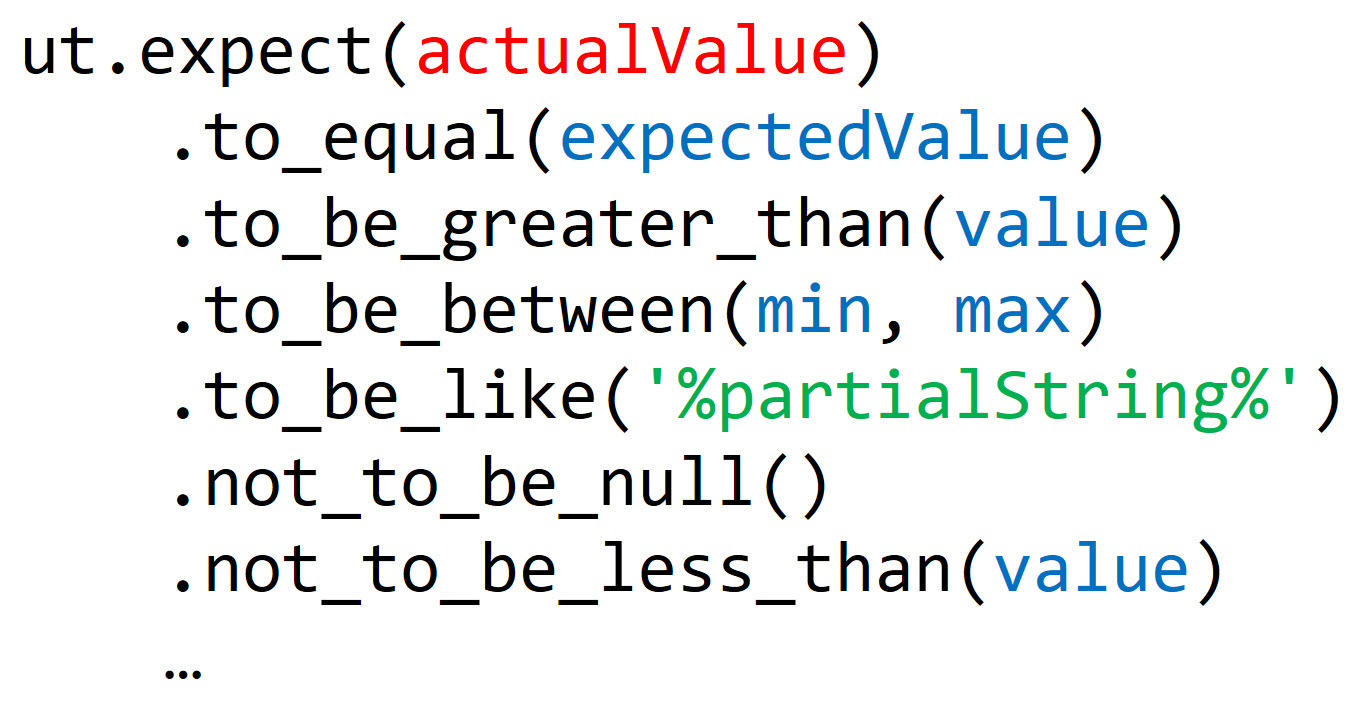

utPLSQL provides some very helpful public methods after its installation, first of all the so-called “expectations”:

The second key part of the framework is the „annotations” with which we can turn regular PL/SQL packages into test-suites.

This is a utPLSQL test-suite header with annotations %suite and %test – these are getting parsed and interpreted by the framework, but only in the package-headers (note that the blank line between both annotations is necessary):

create or replace package ut_v_starwars_characters as

-- %suite(View: V_STARWARS_CHARACTERS)

-- %test(Update character-name via view)

procedure update_name;

end;

Here, %suite is the only annotation that is absolutely necessary. It tells utPLSQL, that the package is in fact a test-suite. With %test we mark the subsequent procedure as a test.

Note that the blank line between both annotations is necessary to tell the parser which annotations belong the the suite-level and which belong to the test-level.

We can now implement the test inside the package body as usual:

create or replace package body ut_v_starwars_characters as

procedure update_name

as

l_actual_name v_starwars_characters.name%type;

begin

-- Arrange: Setup test-data

insert into star_wars_characters (id, name) values (-1, 'Test-Char');

-- Act: Do the actual update

update v_starwars_characters set name = 'Darth utPLSQL' where id = -1;

-- Assert: Check the output

select name into l_actual_name from v_starwars_characters where id = -1;

ut.expect(l_actual_name).to_equal('Darth utPLSQL');

end;

end;

In a similar way to the standard PL/SQL example, we execute an update on the view. Afterwards we select the column we just updated and compare the result with our expected value via utPLSQL-expectation.

One problem we face in a test scenario is, that we don’t know exactly which data might exist in our current database state. Therefore, we initially make sure we have data to update.

In our example, we use a little trick to avoid collisions with eventually existing data: most primary keys are INTEGERs, but the related Sequence or Identity start with 1, so we can just use negative IDs for our test data.

Now we can run the test with the ut.run-method:

set serveroutput on

call ut.run('ut_v_starwars_characters');

View: V_STARWARS_CHARACTERS

Update character-name via view [,002 sec] (FAILED - 1)

Failures:

1) update_name

ORA-01732: Data manipulation operation not legal on this view

ORA-06512: in "SITHDB.UT_V_STARWARS_CHARACTERS", line 10

ORA-06512: in line 6

Finished in ,002346 seconds

1 tests, 0 failed, 1 errored, 0 disabled, 0 warning(s)

As expected the test fails and utPLSQL even provides the complete stack trace.

It’s also noteworthy that we don’t get a „failed“ but an „errored“ test in the final test run summary. The test caused an ORA-exception, which the framework caught and documented for us. If we had more tests, those would nonetheless be run.

Now we can address the essential problem and re-create the lost instead-of trigger (for demonstration without any functionality first):

create or replace trigger save_v_starwars_characters

instead of update on v_starwars_characters

for each row

begin

null;

end;

If we now re-run our test-suite, we get a slightly different result:

call ut.run();

View: V_STARWARS_CHARACTERS

Update character-name via view [,409 sec] (FAILED - 1)

Failures:

1) update_name

Actual: 'Test-Char' (varchar2) was expected to equal: 'Darth utPLSQL' (varchar2)

at "SITHDB.UT_V_STARWARS_CHARACTERS.UPDATE_NAME", line 14 ut.expect(l_actual_name).to_equal('Darth utPLSQL');

Finished in ,41099 seconds

1 tests, 1 failed, 0 errored, 0 disabled, 0 warning(s)

The test is still failing, but this time it’s our expectation that caused the failure and not an ORA-exception. The failure report also tells us very specifically what went wrong and in which line the expectation failed.

Other than the so-called “fail-fast” frameworks, utPLSQL computes all expectations of a test and does not immediately exit the test at the first failure. All failures are collected and printed sequencially in the failure report.

Now we implement the trigger completely and run our test-suite again:

create or replace trigger save_v_starwars_characters

instead of update on v_starwars_characters

for each row

begin

update star_wars_characters

set name = :new.name

where id = :new.id;

end;

/

call ut.run();

View: V_STARWARS_CHARACTERS

Update character-name via view [,004 sec]

Finished in ,005951 seconds

1 tests, 0 failed, 0 errored, 0 disabled, 0 warning(s)

Our view works as expected – and the next time we lose the trigger, we will notice it.

Benefits of automated tests

As you’re reading this article, the probability is very high that you don’t need to be convinced about the advantages of automated tests. But I still want to list some of the benefits automated tests provide in various ways:

- Automated tests are „change detectors“ which warn us about changed functionality (no matter if these changes are intended or – like in our example – unintended)

- They are transportable and can be run (almost costless) on different systems

- If they are implemented to confirm bugfixes, they prevent known errors from reappearing

- They can serve as a confirmation for agreed-upon requirements of the software

These benefits are valid for all kinds of automated tests. When we assume tests that are written by the developers themselves like in this example, there are even some more benefits:

- The creation of tests can help to shift the focus from the how to the what and to look at a functionality from different perspectives

- Well-written tests can serve as code example and documentation for how to use a certain functionality or what it is meant to do

- Self-tests encourage to create „simpler“ programming constructs, because they are easier to test. This has a positive impact on the maintainability of the code

From my point of view, the most important advantage of a solid, automated test base, however, is that it creates the preconditions to improve our own code continuously and confidently in order to do continuous refactoring.

Another test and more annotations

We already secured one functionality of our view, but the new column, in particular, contains logic of significant complexity. That makes it worthwhile securing the behaviour via an automated self-test – especially if we expect the functionality or implementation to change in future.

We define another test and also use a new utPLSQL annotation:

create or replace package ut_v_starwars_characters as

-- %suite(View: V_STARWARS_CHARACTERS)

-- %beforeall

procedure setup_test_data;

-- %test(Update character-name via view)

procedure update_name;

-- %test(View returns correct list of episodes)

procedure return_list_of_episodes;

end;

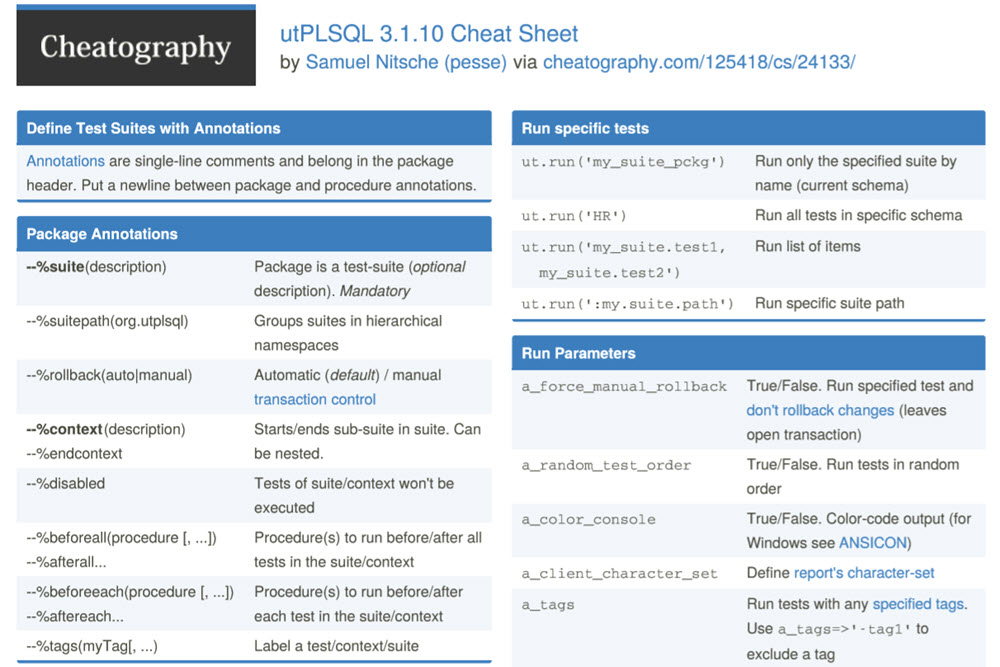

utPLSQL provides a variety of annotations which allow to decouple setup and cleanup from the actual test:

- %beforeall

- %beforeeach

- %beforetest

- %aftertest

- %aftereach

- %afterall

The procedure following the %beforeall-annotation is run once per test-suite, before all of its tests.

The benefit of this annotation is revealed when we look at the implementation:

create or replace package body ut_v_starwars_characters as

function get_view_row

return v_starwars_characters%rowtype

as

l_result v_starwars_characters%rowtype;

begin

select * into l_result

from v_starwars_characters

where id = -1;

return l_result;

end;

procedure setup_test_data

as

begin

insert into star_wars_characters (id, name) values (-1, 'Test-Char');

insert into appearance_in_episode (character_fk, episode_no)

values ( -1, 3 );

insert into appearance_in_episode (character_fk, episode_no)

values ( -1, 5 );

end;

procedure update_name

as

begin

update v_starwars_characters set name = 'Darth utPLSQL' where id = -1;

ut.expect(get_view_row().name)

.to_equal('Darth utPLSQL');

end;

procedure return_list_of_episodes

as

begin

ut.expect(get_view_row().episodes)

.to_equal('3,5');

end;

end;

Now setup_test_data creates the situation we need to execute both tests: one entry in the table star_wars_characters and two entries in the table appearance_in_episode. These tables are the source of the view we want to test.

The tests themselves are pretty easy to read and understand – to increase the readability we added the helper function get_view_row() which returns the complete view-row. To do the check we once again use the to_equal-expectation.

Maybe you’ve been wonderin for a while now what happens to the test data we create?

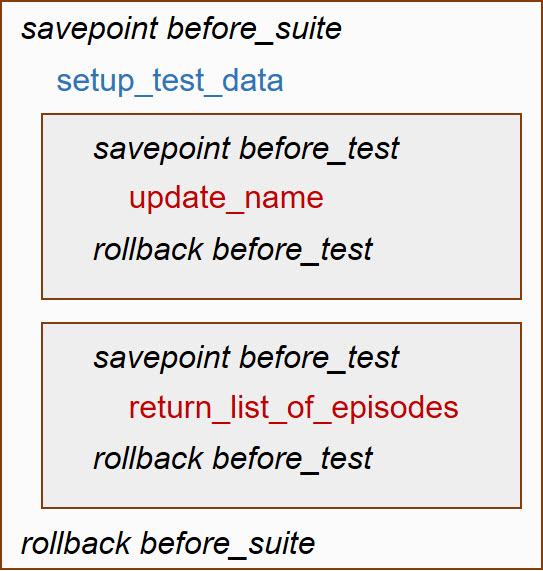

utPLSQL works with savepoints and rollbacks when operating in standard mode. Before each suite and before each test a savepoint is created to which the session is rolled back after finishing the test or suite. That means that all data changes we do, including our test data, are automatically undone at the end of a test run.

It also means that we can’t test functions in this mode which contain transaction control like commit and rollback or DDL.

To secure these scenarios with utPLSQL-tests we add the annotation %rollback(manual) right below %suite. Of course, we need to take care to clean up any changes ourselves now (for example via an %afterall method).

For all situations that don’t require transaction control or DDL, however, the rollback-mechanism provides a huge simplification of cleanup.

Securing edge-cases

What we tested and secured so far are the ways in which we expect our application to be normally used. But what happens if we have a Star Wars character who doesn’t appear in any of the movies – for example the quite popular Ahsoka Tano from the „Clone Wars“ series?

It’s important to think beyond the usual use-cases, beyond what we expect or want the user to do. The chances are high that users will use our application in ways we wouldn’t normally expect.

Let’s write another test for the characters without a movie appearance then:

-- Package-Header

…

-- %test(View returns row but empty list of episodes when character has no appearance)

procedure return_empty_list_of_episodes;

…

-- Package-Body

…

procedure return_empty_list_of_episodes

as

begin

delete from appearance_in_episode where character_fk = -1;

ut.expect(get_view_row().episodes)

.to_be_null();

end;

…

If this test is run successfully, we proved two things at once:

- Even if a Character doesn’t appear in the movies, the view returns a row (otherwise a

NO_DATA_FOUND-Exception would be thrown) - The value of column

EPISODESis NULL in that case.

We could now go on and test whether the view-row still returns the expected name and not NULL.

This shows a dilemma we face when writing automated self-tests.

How many tests are enough?

How far should we go with testing? Should we secure every little eventuality with a test – in our case, for example, that inserts are not allowed or that the name cannot be changed to a name that already exists?

This is something only you can answer, because only you know the circumstances and risks of your project and your database application. However, the following questions can be helpful when making a decision:

- How severe are the consequences when a certain functionality doesn’t behave as expected?

- How likely is it that a certain case happens (e.g. a table-constraint is removed by accident and therefore multiple entries with the same name would become possible)?

- How likely is the code of this functionality to be changed and how often will it happen?

- How difficult or costly is it to test the functionality with an automated self-test?

Software development is often about compromises and it’s exactly the same with creating automated tests. Whether and to what extent they are valuable depends a lot on your goals, your development process, your company and project circumstances.

Even a few tests which secure existing behaviour on a relatively high level can be very beneficial and sufficient for some projects.

If you develop software, which is to be maintained, extended and improved over years, a more detailed base of unit-tests that allows continuous refactoring of your code will provide additional value and improve your development velocity and quality.

My tipp: start small and experiment. Every time an error occurs or gets reported you have to analyze and try to reproduce it. You can usually do this in a way that can be used as a test-setup. When the error is found and fixed, you already have an automated test that prevents this error from happening again.

Every beginning is hard, just keep going! Experiment and observe what helps you. It’s often not the big steps that bring sustainable change, but the small, steady ones that become part of the daily routine.

More information and tools

You can find a lot of information around utPLSQL in the „Resources“-section of utplsql.org.

Meanwhile there is also a comprehensive amount of tools around utPLSQL:

- SQLDeveloper Plugin: https://www.salvis.com/blog/2019/07/06/running-utplsql-tests-in-sql-developer/

- Command-line interface: https://github.com/utPLSQL/utPLSQL-cli

- Maven-Plugin: https://github.com/utPLSQL/utPLSQL-maven-plugin

- Demo-Project incl. CI/CD integration in Travis: https://github.com/utPLSQL/utPLSQL-demo-project

All code example incl. setup can be found here.

0 Comments